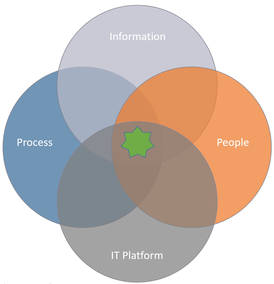

So it’s about information, processes, people and an IT platform, in this case a PLM platform.

To be successful, ALL areas must intersect.

It will not help to have good-quality data, perfectly defined processes and an organization ready to adopt them if the PLM platform is unable to scale to your needs.

Nor will it help to have good-quality data tied to perfectly defined processes and a state-of-the-art PLM system if nobody is using it.

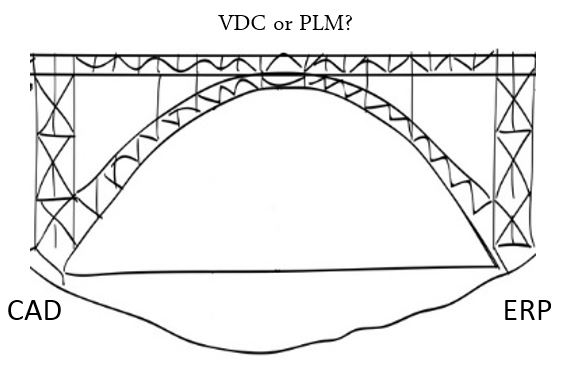

So going back to the headline: PLM platforms, the difficult organizational rollout.

I’ve seen far too many PLM implementations underperform because of an unsuccessful rollout in the organization.

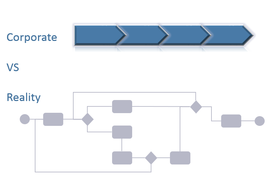

I find it strange that although the projects themselves are often run iteratively, developing or customizing smaller chunks of functionality in each iteration to ensure success, the end users are expected to devour the full elephant of the project in more or less one big bite…

In my view, the rollout of such a large and business-critical platform should also be iterative, giving the end users time to come to terms with what they have learned in each iteration before the next one starts.

I would compare it to building a house.

You would never start erecting the walls before the concrete slab is sufficiently cured.

The same is true for an organization. If more functionality and new processes are put on top before the previously learned functionality and processes have had time to settle, you get resistance, and the foundation becomes weak.

Another important factor is not to train the end users only in a classroom environment and then expect them to perform well in their new system… Because they won’t.

They’re still afraid to do something wrong, and they will struggle to remember what they learned in the classroom.

Then they will try to find solutions in the manuals, growing more and more frustrated by the minute.

If this frustration is allowed to continue for too long, you can be sure that the end result is that they feel the system is too difficult to use and basically sucks. It might sound childish, but holding hands works! Have some super users or trainers available in the everyday work situation to help and guide the users during the first few weeks.

That will mitigate the fear factor of doing something wrong, and steadily build confidence and ability.

Bjorn Fidjeland
